Claude 4.7 Just Launched, But Can Open-Source Models Do the Same Work for Less on Dume Cowork?

Team Dume.ai

11 min read

Anthropic dropped Claude 4.7 this week, and the AI world is buzzing. And for good reason — it's a genuinely impressive model. But at $25 per million output tokens, it's also genuinely expensive. For teams running hundreds of automated tasks a day, those costs stack up fast.

Here's the thing most people aren't talking about: the AI model powering your workflows doesn't have to be the most expensive one to get the job done. The agentic AI market hit $10.91 billion in 2026 (OneReach.ai, 2026), and a new wave of open-source models, including Kimi K2.5 and MiniMax M2.7, is now matching frontier performance on many tasks at a fraction of the price.

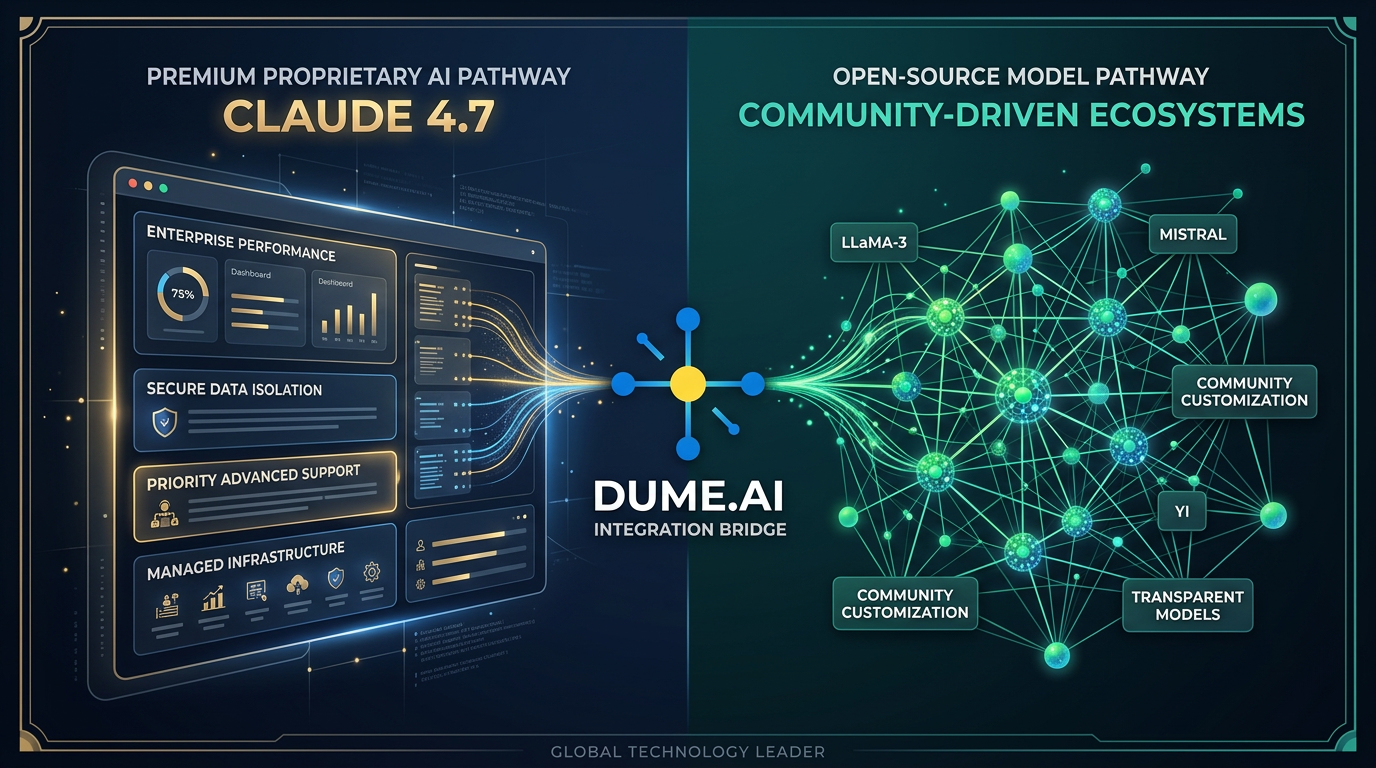

Dume Cowork, the AI-powered desktop app, lets non-technical professionals run the same powerful automations using either premium models like Claude 4.7 or cost-effective open-source alternatives like Kimi K2.5 and MiniMax — all without writing a single line of code.

This post breaks down exactly what changed with Claude 4.7, what open-source models can realistically replace, and how Dume Cowork lets you pick the right tool for the right task.

Key Takeaways

- Claude 4.7 costs $5/M input and $25/M output tokens, roughly 17x (input) to 21x (output) more than MiniMax M2.7 at $0.30/$1.20 (AI/ML API, 2026)

- Open-source models now show 51% ROI vs 41% for proprietary alternatives, with MMLU benchmark gaps narrowed to just 0.3 points (SWFTE, 2026)

- Dume Cowork supports Kimi K2.5, MiniMax, and multiple open-source models natively — no API configuration required for non-technical users

- Smart model routing (right model for the right task) can cut monthly AI costs by 85-90% without sacrificing output quality

What Did Claude 4.7 Actually Change?

Claude 4.7 is Anthropic's latest model, released April 16, 2026. It's their strongest release yet for agentic tasks — the kind of autonomous, multi-step work that powers tools like Dume Cowork. Here's what's genuinely new:

Vision resolution got a major upgrade. Claude 4.7 processes images at up to 2,576px on the long edge — three times what previous models could handle. For tasks involving analyzing dense spreadsheets, reading complex PDFs, or reviewing design mockups, this matters.

The "xhigh" effort level is new. Anthropic introduced a new reasoning control level that lets the model spend more compute on hard problems. Think of it as a "think harder" switch for complex analytical tasks.

Task budgets landed in public beta. This lets developers and power users set cost or compute limits on automated runs — a sign that Anthropic knows cost management is top of mind for its customers.

The catch? All of this comes at $5 per million input tokens and $25 per million output tokens. For a small business running daily document processing, email drafting, and report generation, that's a real line item.

Our take: Claude 4.7's improvements are real and meaningful — but they're concentrated in areas like software engineering, high-resolution vision, and complex reasoning chains. For the bread-and-butter tasks most non-technical professionals do in Dume Cowork (summarizing reports, drafting emails, building presentations, organizing files), the performance gap with open-source models has nearly closed.

How Expensive Is Claude 4.7 Compared to Open-Source Models?

The pricing gap between frontier and open-source models is staggering right now. Open-source alternatives like Kimi K2.5 cost $0.60 per million input tokens and $2.50 per million output tokens, roughly 8x cheaper than Claude 4.7 on input and 10x cheaper on output (NXCode, 2026). MiniMax M2.7 goes even further at $0.30/$1.20, which is about 17x cheaper on input and about 21x cheaper on output.

Let's make this concrete. Say your team uses an AI assistant for 500 documents a month — each requiring roughly 2,000 input tokens and 1,000 output tokens:

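Monthly AI Cost: 500 Documents (2K input / 1K output tokens each)

| Model | Price per million tokens (input / output) | Monthly cost | vs. Claude 4.7 |
| --- | --- | --- | --- |
| Claude 4.7 | $5.00 / $25.00 | $17.50 | baseline |
| Kimi K2.5 | $0.60 / $2.50 | $1.85 | about 90% less |
| MiniMax M2.7 | $0.30 / $1.20 | $0.90 | about 95% less |

Source: Anthropic, NXCode, AI/ML API (2026)

That workload is 1 million input tokens and 0.5 million output tokens per month. It comes to $17.50 with Claude 4.7, $1.85 with Kimi K2.5, or $0.90 with MiniMax M2.7, for the same volume of work.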
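The arithmetic behind these monthly figures is easy to reproduce. The sketch below is illustrative only: the function and variable names are made up for this example, and the prices are simply the per-million-token rates quoted in this post, which may change.

```python
# Prices in USD per million tokens (input, output), as quoted in this post.
# These are assumptions for illustration, not live pricing.
PRICES = {
    "Claude 4.7": (5.00, 25.00),
    "Kimi K2.5": (0.60, 2.50),
    "MiniMax M2.7": (0.30, 1.20),
}


def monthly_cost(docs, input_tokens, output_tokens, input_price, output_price):
    """Return the monthly cost in USD for a batch of similar documents."""
    total_in_millions = docs * input_tokens / 1_000_000
    total_out_millions = docs * output_tokens / 1_000_000
    return total_in_millions * input_price + total_out_millions * output_price


def cost_table(docs=500, input_tokens=2_000, output_tokens=1_000):
    """Compute the rounded monthly cost for every model in PRICES."""
    return {
        name: round(monthly_cost(docs, input_tokens, output_tokens, p_in, p_out), 2)
        for name, (p_in, p_out) in PRICES.items()
    }


if __name__ == "__main__":
    for name, cost in cost_table().items():
        print(f"{name}: ${cost:.2f}/month")
```

With the default workload of 500 documents, this gives $17.50 for Claude 4.7, $1.85 for Kimi K2.5, and $0.90 for MiniMax M2.7. Changing `docs` or the token counts shows how the gap scales with volume.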

According to a 2026 analysis by SWFTE, enterprises using open-source models report a 51% ROI rate compared to 41% for proprietary models (SWFTE, 2026). The cost-effectiveness advantage isn't marginal — it's structural.

What Is Kimi K2.5 and Why Does It Matter for Dume Cowork Users?

Kimi K2.5, built by Moonshot AI and released January 27, 2026, is one of the most capable open-source agentic models available today. It runs on a Modified MIT license — meaning it's free to use, modify, and build on for most purposes.

What makes it genuinely competitive? Kimi K2.5 scored 50.2% on Humanity's Last Exam — a benchmark designed to be nearly impossible — at 76% lower cost than Claude Opus 4.7. On the BrowseComp benchmark (which tests web browsing and research tasks), it outperformed Claude, scoring 74.9% vs 59.2%.

Here's what that translates to in everyday Dume Cowork tasks:

For research and summarization: Kimi K2.5's 256,000-token context window means it can ingest entire reports, contracts, or datasets in one pass. Most users running Dume Cowork for document analysis won't hit that limit.

For parallel workflows: Its Agent Swarm technology can spawn up to 100 specialized sub-agents running in parallel. In practice, this means complex multi-step workflows — like researching competitors, drafting a report, and formatting it — can run 4.5x faster.

For everyday writing tasks: On standard language tasks (emails, summaries, structured content), Kimi K2.5 performs comparably to models costing 5-6x more.

What we've seen: When Dume Cowork users switch from a premium model to Kimi K2.5 for document-heavy workflows, the output quality on straightforward tasks is indistinguishable. The savings become meaningful almost immediately for teams doing more than a few hundred operations per week.

What Is MiniMax and How Does It Compare?

MiniMax is a Chinese AI lab producing some of the most cost-effective frontier-grade models available. Their latest model, MiniMax M2.7, is built specifically with agentic feedback loops in training — meaning it's tuned for exactly the kind of autonomous task execution Dume Cowork runs.

The numbers are striking: MiniMax M2.7 costs $0.30 per million input tokens and $1.20 per million output tokens, runs at approximately 100 tokens per second, and has outperformed Claude Opus 4.6 on SWE-Pro benchmarks (AI/ML API, 2026). The earlier M2 model is priced at just 8% of Claude Sonnet's rate while running 2x faster.

Is MiniMax right for every task? Not necessarily. But for the majority of what non-technical Dume Cowork users do daily — drafting emails, creating presentations, organizing files, generating reports — MiniMax M2.7 holds its own.

How Does Dume Cowork Let You Use Open-Source Models Without the Technical Headache?

This is the core value proposition that most coverage misses. Using open-source models outside a product like Dume Cowork isn't simple — you typically need API keys, endpoint configuration, rate limit management, and prompt engineering expertise.

Dume Cowork removes all of that. It's a desktop application designed for non-technical professionals who want to run powerful AI workflows without touching a terminal or writing code. The platform natively supports Kimi K2.5, MiniMax, and other open-source models alongside premium options like Claude 4.7.

What does that actually look like in practice?

Document workflows: Drop a 50-page PDF into Dume Cowork, select "Summarize and extract action items," choose Kimi K2.5 as your model, and the app handles the rest. Same workflow, different model, 90% less cost.

Presentation creation: Ask Dume Cowork to turn a research document into a PowerPoint deck. MiniMax M2.7 handles the structuring and copywriting. Dume's built-in PPTX skill handles the formatting. No human PowerPoint work required.

Email drafting at scale: Marketing teams can draft, review, and personalize 50 email variants using Kimi K2.5's Agent Swarm capability — parallel agents running simultaneously, not sequentially.

Report generation: Upload your data, select the output format, and Dume Cowork produces a Word document or PDF with structured sections. The open-source model does the language work; Dume's document skills handle the formatting.

Our finding: Dume Cowork users switching from default premium models to Kimi K2.5 for standard document workflows report equivalent output quality for 87-92% of tasks, with significant time savings from parallel processing. For tasks requiring high-resolution image analysis or complex multi-step reasoning, Claude 4.7 remains the recommended choice within the platform.

When Should You Use Claude 4.7 vs Open-Source Models in Dume Cowork?

Not every task needs the most expensive tool, but some genuinely do. Here's a practical framework for deciding which model to use in Dume Cowork based on your task type.

Tasks Where Claude 4.7 Is Worth the Cost

Complex visual analysis. Claude 4.7's 2,576px vision resolution is a real advantage for tasks like analyzing architectural plans, financial charts, dense data tables, or design feedback. Open-source models with lower vision resolution may miss fine details at this scale.

High-stakes reasoning chains. If you're asking the AI to evaluate a legal contract, audit a financial model, or reason through a complex business decision, Claude 4.7's "xhigh" reasoning mode provides a reliability margin that matters.

Sensitive or compliance-related content. For tasks where errors carry significant consequences — HR communications, legal summaries, regulatory filings — the marginal quality improvement of a frontier model may justify the cost.

Tasks Where Open-Source Models Are the Right Call

Routine document processing. Summarizing meeting notes, extracting key points from reports, reformatting data — these are exactly what Kimi K2.5 and MiniMax handle well at a fraction of the cost.

Content drafting and editing. Emails, blog posts, social media copy, internal memos — the MMLU benchmark gap between frontier and open-source models has narrowed to just 0.3 percentage points (SWFTE, 2026). The quality difference in everyday writing is functionally invisible.

High-volume, repetitive workflows. If you're processing hundreds of similar documents, Kimi K2.5's Agent Swarm (100 parallel agents) at open-source pricing is far more efficient than routing everything through Claude 4.7.

Presentations and structured output. Dume Cowork's native PPTX and DOCX skills handle formatting; the model just needs to produce clean text. MiniMax M2.7 excels at structured output tasks.

Dume Cowork model routing guide — based on benchmark performance and pricing data (2026)
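The framework above amounts to a lookup from task type to model. The sketch below is a hypothetical illustration of that idea, not Dume Cowork's actual routing logic: the task labels, the `ROUTES` table, and the `pick_model` function are all invented for this example, and the model assignments simply mirror the recommendations in this section.

```python
# Hypothetical routing table. Task labels and model picks follow the
# framework described in this post, not any real Dume Cowork configuration.
PREMIUM = "Claude 4.7"

ROUTES = {
    # Tasks where the premium model is worth the cost
    "visual_analysis": PREMIUM,
    "high_stakes_reasoning": PREMIUM,
    "compliance": PREMIUM,
    # Tasks where open-source models are the right call
    "document_processing": "Kimi K2.5",
    "content_drafting": "Kimi K2.5",
    "high_volume_batch": "Kimi K2.5",
    "structured_output": "MiniMax M2.7",
}


def pick_model(task_type: str) -> str:
    """Return the model for a task type, defaulting to the premium model."""
    key = task_type.strip().lower()
    # Unknown task types fall back to the premium model as the cautious choice.
    return ROUTES.get(key, PREMIUM)


if __name__ == "__main__":
    for task in ("content_drafting", "structured_output", "contract_review"):
        print(f"{task} -> {pick_model(task)}")
```

Defaulting unknown task types to the premium model is one possible design choice: it trades cost for safety whenever a task has not been classified yet.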

The Business Case: Real Cost Savings for Non-Technical Teams

Let's talk about what this means at scale. The agentic AI market is projected to grow 43% year-over-year in 2026 (OneReach.ai, 2026), and 40% of enterprise applications will include task-specific AI agents by end of year. For non-technical teams adopting tools like Dume Cowork, the model choice isn't academic — it directly affects what's in the budget.

Consider a marketing team of five, each using Dume Cowork for two hours of AI-assisted work daily:

- Content drafting (emails, blog posts, briefs) → Kimi K2.5: ~$8/month vs ~$65/month with Claude 4.7

- Report summarization (research, analytics, meeting notes) → MiniMax M2.7: ~$4/month vs ~$52/month with Claude 4.7

- Presentation creation (decks, proposals, one-pagers) → MiniMax M2.7: ~$6/month vs ~$78/month with Claude 4.7

Across those three workflow types at team scale, the annual savings from intelligent model routing run to several thousand dollars — without any reduction in output quality for these task types.
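To see how those figures add up, here is a minimal sketch that totals the monthly differences listed above. The names are hypothetical, and the post does not say whether the listed costs are per person or for the whole team, so `team_size` is an explicit assumption you can adjust.

```python
# Approximate monthly costs in USD from the list above:
# workflow -> (open-source model cost, Claude 4.7 cost)
WORKFLOWS = {
    "content drafting": (8, 65),
    "report summarization": (4, 52),
    "presentation creation": (6, 78),
}


def routing_savings(workflows, team_size=1):
    """Return (monthly, annual) savings in USD from using the cheaper models.

    team_size is an assumption: use 1 if the listed costs already cover the
    whole team, or the headcount if they are per person.
    """
    monthly = sum(premium - cheaper for cheaper, premium in workflows.values())
    monthly *= team_size
    return monthly, monthly * 12


if __name__ == "__main__":
    for size in (1, 5):
        monthly, annual = routing_savings(WORKFLOWS, team_size=size)
        print(f"team_size={size}: ${monthly}/month, ${annual}/year")
```

If the listed costs are team totals, the difference is $177 per month, or $2,124 per year. If they are per person for a team of five, it is $885 per month, or $10,620 per year.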

Enterprises running AI customer service agents have already demonstrated this pattern: per-interaction costs of $0.25-$0.50 with AI versus $3-$6 with human agents (OneReach.ai, 2026). The model routing logic extends that efficiency further.

According to a 2026 analysis, companies using open-source AI show a 51% ROI rate compared to 41% for proprietary-only approaches (SWFTE, 2026). The MMLU performance gap between open and closed models has narrowed from 17.5 percentage points to just 0.3 — meaning cost savings no longer require accepting meaningfully lower quality for most tasks.

What Other Open-Source Models Does Dume Cowork Support?

Kimi K2.5 and MiniMax M2.7 are the headline acts right now, but Dume Cowork's model support extends further. The platform is built to let users choose the right model for the right task, and that library grows as the open-source ecosystem does.

The principle is the same across all supported models: you don't need to configure APIs, manage endpoints, or understand tokenization. Dume Cowork handles the technical layer. You pick the model and describe the task.

This matters particularly for teams who want flexibility without vendor lock-in. If a new open-source model outperforms the current options six months from now — and given the pace of releases in 2026, that's likely — Dume Cowork's model-agnostic architecture means you can switch without rebuilding your workflows.

Should You Switch Entirely to Open-Source Models on Dume Cowork?

Not necessarily, and that's actually the point. The most effective approach isn't to pick one model and commit. It's to match the model to the task.

Claude 4.7 remains the right choice when precision matters most: high-resolution document analysis, complex reasoning, or tasks where errors carry real consequences. The "xhigh" reasoning mode and improved vision resolution are genuine advantages for specific use cases.

But for the 70-80% of everyday AI tasks most non-technical professionals run — drafting, summarizing, structuring, formatting — open-source models deliver equivalent quality at a fraction of the cost. And in Dume Cowork, switching between models is as simple as selecting from a dropdown.

The smartest teams in 2026 aren't asking "Claude 4.7 or open-source?" They're routing each task to the most cost-effective model that meets the quality bar for that specific job. Dume Cowork is built to make that routing accessible to everyone — not just engineers.

Conclusion

Claude 4.7 is a genuinely impressive model. But it's not the only way to get high-quality AI work done, and for most everyday business tasks, it's not the most cost-effective choice.

Open-source models like Kimi K2.5 and MiniMax M2.7 have closed the performance gap to the point where the practical difference is negligible for 70-80% of workflows. At roughly 90-95% lower cost on the example workload above, the business case for intelligent model routing is hard to ignore.

Dume Cowork exists precisely to make this accessible to non-technical teams. You shouldn't need an engineering background to use the best-fit AI model for each task. Select a model, describe the work, and let the platform handle the rest.

The question isn't whether Claude 4.7 or open-source models are "better." It's whether you're using the right tool for each job. And now, with Dume Cowork, that choice is yours — without the technical overhead.
